GSA SER Verified Lists Vs Scraping

The Core of Automated Link Building: Understanding Your Target Sources

In the world of search engine optimization, GSA Search Engine Ranker remains one of the most aggressive and scalable tools for building backlinks. Its power, however, is entirely dependent on the quality and source of the targets you feed it. This brings us to a critical fork in the road that every user eventually faces: the debate between using pre-made verified lists and relying on the software’s native scraping engines. The question of GSA SER verified lists vs scraping is not just about convenience; it is a fundamental choice that dictates your success rate, domain diversity, and ultimately, the safety of your link profile.

What Are GSA SER Verified Lists?

Verified lists are pre-curated collections of URLs where GSA SER has already confirmed it can successfully register and post a backlink. These lists are typically compiled by experienced users and sold or shared across communities. The primary appeal is instant efficiency. Instead of burning CPU cycles and proxies on hunting for targets that might not work, you load a list and the software immediately starts posting to sites that are known to be functional. This method offers a plug-and-play solution that completely bypasses the searching phase.

The Advantages of Using Verified Lists

The immediate benefit of verified lists is speed. Your submissions per minute can skyrocket because the software skips the lengthy process of identifying footprints, testing platforms, and checking if registration is possible. It also provides a level of predictability; you generally know you are posting to a certain category of sites, be it WordPress comments, Joomla articles, or guestbooks. For newcomers, this removes the steep learning curve of configuring platform-specific search queries properly.

The Hidden Dangers and Limitations

However, reliance on verified lists comes with significant baggage. The most obvious problem is the "public toilet" effect: if a list is sold cheaply to thousands of users, its target URLs get spammed to death. Links from these domains lose value rapidly and are more likely to trigger algorithmic penalties. Furthermore, because the targets are static, you are not discovering new domains, and a profile that keeps drawing on the same pool of sites can look unnatural to search engines. A list is also a snapshot in time; many URLs will be dead, de-indexed, or blocked within days.
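One rough way to gauge how saturated your targets are is to check how many of your hosts also appear in a widely shared list. The sketch below is not part of GSA SER; it assumes both lists are plain collections of full URLs (with `http://` or `https://`), and the function names `hosts` and `overlap_ratio` are made up for illustration.

```python
from urllib.parse import urlsplit


def hosts(urls):
    """Collect the distinct hostnames found in an iterable of full URLs."""
    found = set()
    for url in urls:
        # urlsplit only reports a hostname when the URL includes a scheme.
        host = urlsplit(url.strip()).hostname
        if host:
            found.add(host.lower())
    return found


def overlap_ratio(my_urls, shared_urls):
    """Fraction of my distinct hosts that also appear in the shared list."""
    mine = hosts(my_urls)
    if not mine:
        return 0.0
    return len(mine & hosts(shared_urls)) / len(mine)


if __name__ == "__main__":
    # Hypothetical data, for illustration only.
    my_targets = ["http://a.example/post/1", "http://b.example/guestbook"]
    shared_list = ["https://a.example/other", "http://c.example/"]
    print(overlap_ratio(my_targets, shared_list))
```

A ratio close to 1.0 would suggest that nearly all of your targets are already in circulation elsewhere.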

The Mechanics of Scraping with GSA SER

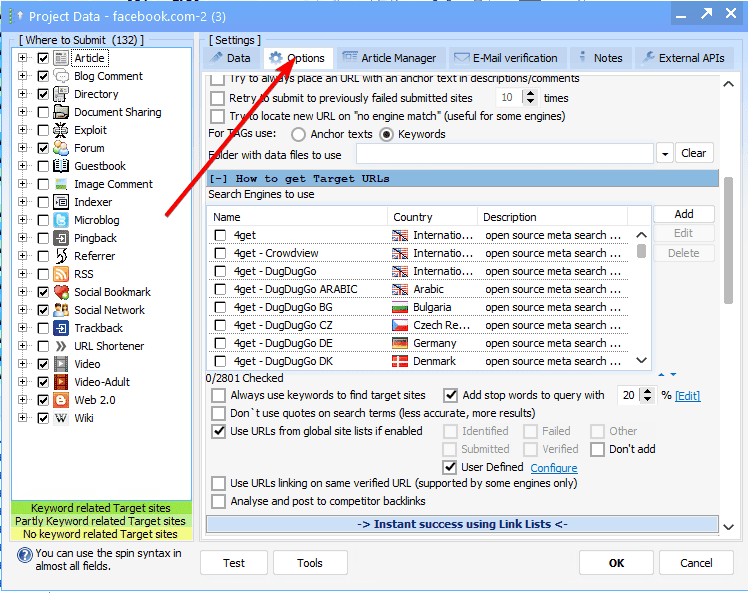

Scraping, on the other hand, is the dynamic method where GSA SER harvests fresh targets directly from search engines like Google, Bing, and Yahoo based on your chosen footprints. The software queries search engines using specific keywords and platform identifiers, parses the results, and analyzes each URL to see if it’s a viable posting location. When evaluating GSA SER verified lists vs scraping, you are essentially comparing a stagnant pond to a flowing river of data.

The Power of Real-Time Discovery

The greatest strength of scraping is immediacy and uniqueness. You are finding targets that have never been touched by another GSA user, which means a much higher trust factor for those domains before they get spammed. Scraping allows you to build a truly diverse link profile that mimics natural growth, pulling from a massive variety of IPs, name servers, and TLDs. It is the only way to scale a custom project without leaving a heavy, repetitive footprint that can be traced back to a single commercial list.
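To put a number on "diverse", you can summarize a target list by its unique hosts and TLDs. This is a minimal sketch under stated assumptions: the input is a list of full URLs, the TLD is approximated as the last dot-separated label (so `co.uk` counts as `uk`), and `host_of` and `diversity_report` are hypothetical helper names, not GSA SER features. IP and name server diversity would need DNS lookups and are left out.

```python
from collections import Counter
from urllib.parse import urlsplit


def host_of(url):
    """Return the lowercase hostname of a full URL, without a leading 'www.'."""
    host = (urlsplit(url.strip()).hostname or "").lower()
    return host[4:] if host.startswith("www.") else host


def diversity_report(urls):
    """Summarize how spread out a URL list is across hosts and TLDs."""
    # Entries without a parseable hostname are skipped.
    all_hosts = [h for h in (host_of(u) for u in urls) if h]
    host_counts = Counter(all_hosts)
    # Naive TLD: the last label of each distinct host.
    tld_counts = Counter(h.rsplit(".", 1)[-1] for h in host_counts)
    top_share = max(host_counts.values()) / len(all_hosts) if all_hosts else 0.0
    return {
        "urls": len(all_hosts),
        "unique_hosts": len(host_counts),
        "unique_tlds": len(tld_counts),
        "top_host_share": top_share,
    }
```

A high `top_host_share` means one host dominates the list, which is the repetitive footprint described above.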

The Resource Cost of Scraping

This treasure hunt is not free. Effective scraping demands high-quality private proxies that can still query Google without being blocked. Public proxies are useless here, as search engines will block them almost instantly. You will also need a robust server with ample RAM and bandwidth. CPU usage is far higher than with list posting, and you must tune your search engine delay settings carefully to avoid wasting resources on "no results" errors. In the GSA SER verified lists vs scraping equation, scraping represents a higher operational cost and a more complex setup.

Comparing Success Rates and Link Quality

When it comes to raw verification rate, a good list will often show a higher percentage of "Verified" entries in the log at first, especially on simple platforms. But a skilled scraper configured with premium proxies will eventually surpass it in volume and, more importantly, in link quality. A list largely offers low-tier platforms like guestbooks and image comments. Scraping allows you to target the hard-to-find, high-value engines, such as educational forums, niche article directories, and complex CMS platforms that list vendors often ignore because they are difficult to automate in bulk creation mode.
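The difference between verification rate and verified volume is easy to see with a little arithmetic. The figures below are invented purely for illustration and are not measurements from any real run; `verified_rate` is a hypothetical helper.

```python
def verified_rate(verified, submitted):
    """Share of submissions that ended up verified (0.0 when nothing was submitted)."""
    if submitted <= 0:
        return 0.0
    return verified / submitted


if __name__ == "__main__":
    # Hypothetical counts: a small list run versus a larger scraping run.
    list_run = {"submitted": 1000, "verified": 400}
    scrape_run = {"submitted": 10000, "verified": 1500}

    for name, run in (("list", list_run), ("scrape", scrape_run)):
        rate = verified_rate(run["verified"], run["submitted"])
        print(f"{name}: rate={rate:.0%}, verified links={run['verified']}")
```

In this made-up case the list run has the higher rate, while the scraping run produces more verified links in absolute terms.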

Footprints and Platform Targeting: A Deeper Look

Scraping gives you surgical control. If you want links only from sites with a specific keyword in the domain name, a certain OBL (outbound link) count, or a specific country, you can engineer your search footprints to find exactly that. Verified lists are a blunt instrument; you get what the list builder found, which is usually optimized for volume, not relevance. The true debate in GSA SER verified lists vs scraping is often a debate about whether you want to be a spammer casting a massive net or a tactician hunting in a focused area.

Creating a Hybrid Workflow for Maximum Efficiency

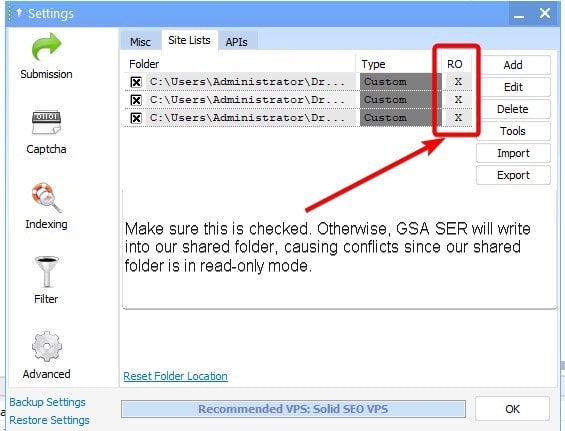

Advanced users rarely choose one side of the argument exclusively. A superior strategy is to blend both methods. You can use scraping to build your own private master lists, verify them once, and then reuse them for lower-tier projects or test runs. Alternatively, you can use cheap verified lists as a background "churn and burn" buffer for low-tier projects, while your main money sites are powered exclusively by freshly scraped, high-trust targets. This hybrid allows you to soak up link volume where it doesn't matter while preserving the integrity of your critical assets.

The Final Verdict

If you are looking for a set-it-and-forget-it solution and you don't care about the longevity of your domains, verified lists provide a low-maintenance entry point. However, if you are building a site you intend to rank and keep for the long haul, scraping is not just an option—it is a necessity. The actual value lies in understanding that GSA SER verified lists vs scraping represents a spectrum of control. The more you scrape, the more unique your link graph becomes, and the less you look like a bot using outdated, over-spammed databases.